Advancing Innovation in Dermatology is pleased to make available our collection of scholarly articles, industry news, and interviews with the professionals accelerating innovation in skin health and patient care. Beyond our in-person and virtual events, this content is another way to strengthen the community of innovators we aim to build and maintain.

How medical AI devices are evaluated: limitations and recommendations from an analysis of FDA approvals.

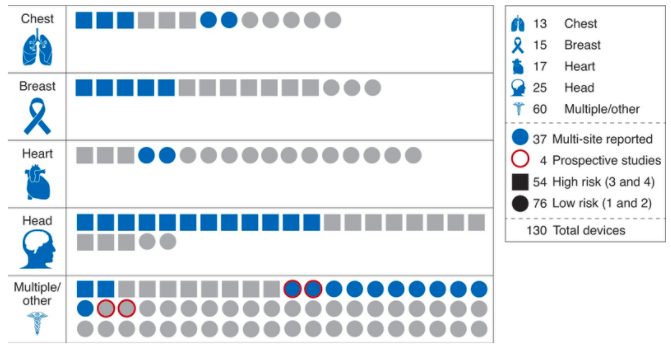

In any nascent field, the development of best practices is critical to ensure the reliability, reproducibility, and safety of new devices. A recent analysis by Stanford researchers reviewed the evaluation process for all 130 FDA-approved medical AI devices. The authors found that 126 of the 130 devices underwent only retrospective studies, and that none of the 54 “high-risk” devices were tested in prospective studies. Furthermore, most of the computer-aided diagnostic devices did not include a side-by-side comparison of doctor performance with and without AI, which, as the authors note, is a critical factor in the devices’ intended use. Most devices (93/130) did not undergo multi-site assessment, and 59/130 did not report the sample size of the studies used. In a case study of pneumothorax detection software, the authors go on to demonstrate substantial variability in device performance across multiple clinical sites. They call for more prospective studies of AI-enabled devices measured against the standard of care, as well as increased post-market surveillance in this new field.

Wu E, et al. How medical AI devices are evaluated: limitations and recommendations from an analysis of FDA approvals. Nat Med. 2021 Apr;27(4):582-584.

Figure from Wu et al. 2021: 130 FDA-approved AI medical devices characterized by body site, risk level, and study characteristics.

Advancing Innovation in Dermatology Inc. is a nonprofit, tax-exempt charitable organization under Section 501(c)(3) of the Internal Revenue Code.
